Eric Wong

@riceric22

Assistant professor at University of Pennsylvania. Machine learning, optimization, robustness & interpretability.

profericwong.bsky.social

ID: 53464710

https://www.cis.upenn.edu/~exwong/ 03-07-2009 18:48:34

186 Tweets

1.1K Followers

110 Following

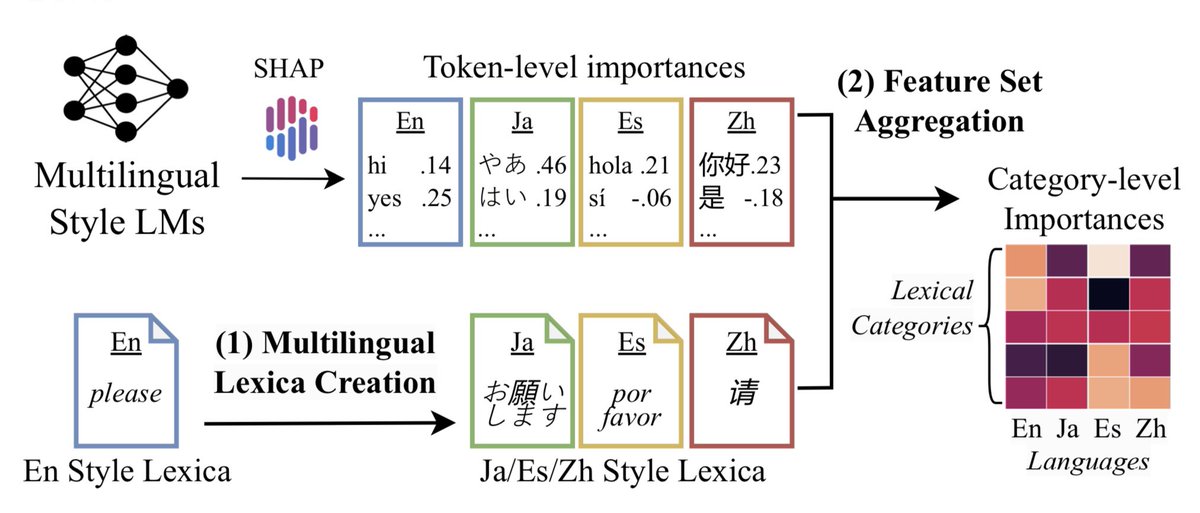

I'm attending SoCal NLP Symposium on Friday to present our work on the lack of cultural awareness in multilingual LMs 🌎 We collaborate w/ psychologists and show LMs don't understand cultural nuances in emotion. This work won #bestpaper at ACL’s WASSA 2023! Paper: aclanthology.org/2023.wassa-1.1…

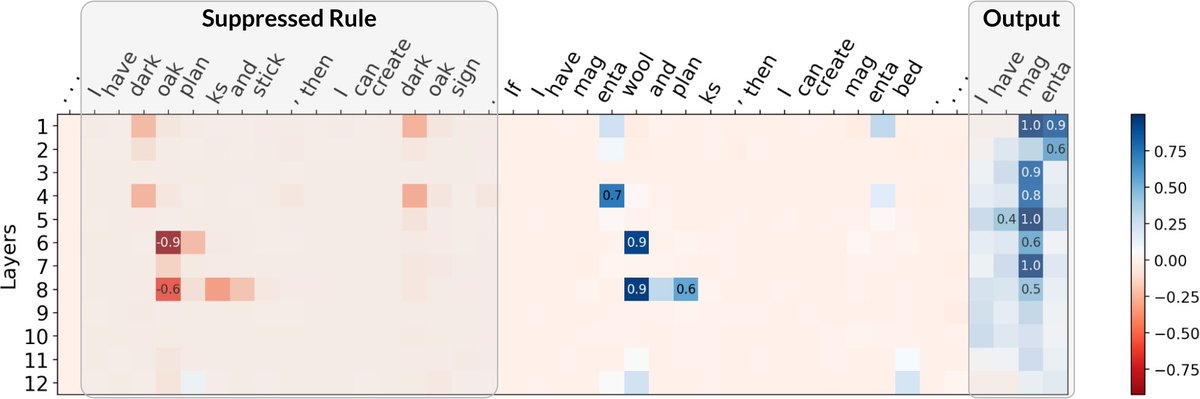

I will be presenting our paper on stability guarantees for feature attributions at NeurIPS Conference on Tuesday (Dec 12) at 10:45 am CST! Poster: Great Hall & Hall B1+B2 (level 1) #1625 arXiv: arxiv.org/abs/2307.05902 blog post: debugml.github.io/multiplicative… (1/3)

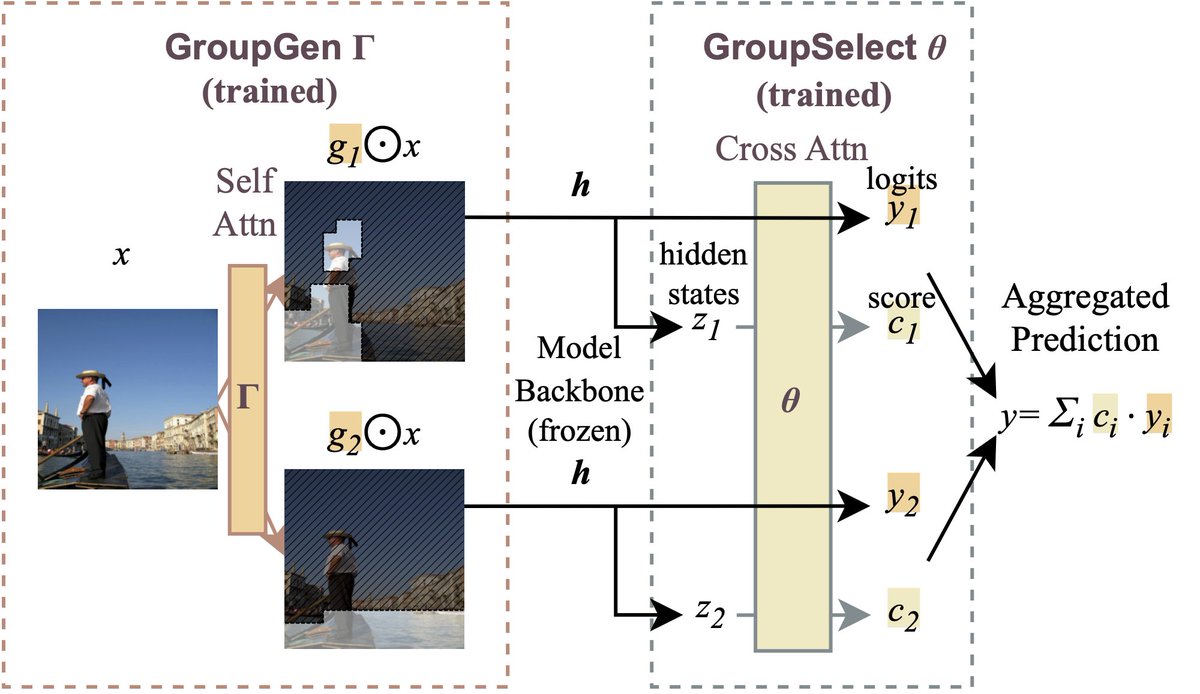

I will be presenting our work on creating faithful groups of features for attribution at the XAI in Action workshop at NeurIPS Conference today (Dec 16) at 4:30pm-5:30pm arXiv: arxiv.org/abs/2310.16316 blog: debugml.github.io/sum-of-parts Come to our poster for a chat!

Why do SAM segments look nice yet perform poorly in downstream tasks? We've been studying evaluation metrics for feature groups that pinpoint the underlying issues. Stop by our #ICLR2024 poster today at 10:45am (#323 Hall B), or Chaehyeon Kim's talk on Friday at 1:15pm (Hall A3).

📢 We're back with a new edition, this year at NeurIPS Conference in Vancouver! Paper deadline is August 30th, we are looking forward to your submissions!