Amit Sangani

@asangani7

Senior Director of Partner Eng @Meta. Prev CTO @MightyText, Ex-@Google. AI/ML, Llama, PyTorch and Gen AI developer & enthusiast.

ID: 121887943

https://llama.meta.com/

10-03-2010 23:00:22

858 Tweets

1.1K Followers

632 Following

📣 New course now available on DeepLearning.AI: Introducing Multimodal Llama 3.2! The course covers both Llama 3.1 & Llama 3.2 and includes detailed rundowns on multimodal prompting, custom tool calling, Llama Stack + more. Take the 1h course for free ⬇️ bit.ly/3ZU80Ik

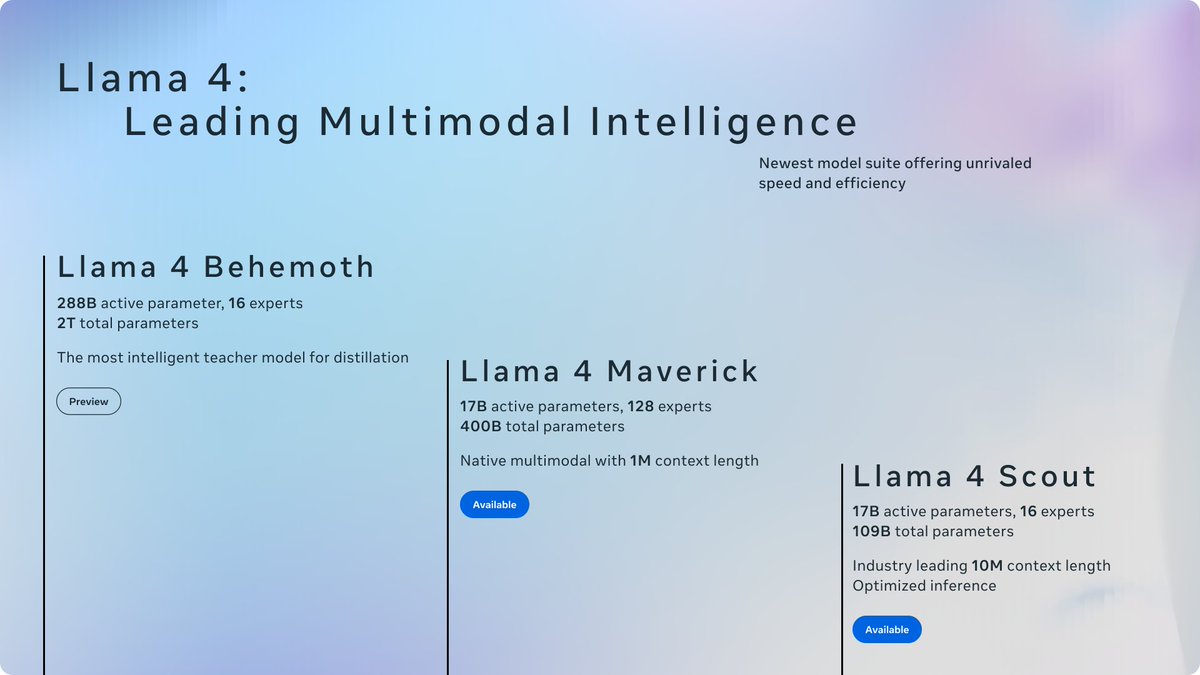

Introducing "Building with Llama 4." This short course is created with Meta AI at Meta, and taught by Amit Sangani, Director of Partner Engineering for Meta’s AI team. Meta’s new Llama 4 has added three new models and introduced the Mixture-of-Experts (MoE) architecture to its